Stable Diffusion

Thoughts on Stable Diffusion

29 August, 2022

Stable Diffusion was released about a week ago: another neural network that turns noise into beautiful 512x512 pixel pictures based on a text query of up to 300 characters. Any text, any style. Any objects, people, animals, places, times and events. Roughly speaking, the script takes whatever best fits your description and blends it into a single image, drawing on billions of source images (strictly speaking this is not true: it learns from the images, but does not reproduce them as is!).

You can also use your own rough sketch or photo as a source and get a more or less finished illustration of what you need, in the style you like.

Why would you want to do that? I think it's obvious: to speed up workflows. Show a client sketches of a house in a format they understand, with the right light and the right materials. Put any actor in any movie. Draw the base of an illustration, process it in the right style and then only add details. Get a free banner in any style for Facebook ads in just a minute.

The most curious thing is not the release itself, although SD clearly produces higher quality than previous generators (hello, DALL-E 2, you're obsolete). It is not even the ability to run it locally on your own computer, although this previously required dedicated servers with video cards costing 3-5 thousand dollars and dozens of gigabytes of RAM. Now any laptop with even a five-year-old Nvidia card with 4-6 GB of memory will do (it will suffer, but it will cope).

I'm curious about two other things:

1. A couple of months ago we had the paid DALL-E 2, and it seemed fine, if censored and clumsy. Then Midjourney came along and everyone was excited because you can generate popular characters with it (everyone except me: the signature look of an AI trained on sites like ArtStation is too obvious).

The advent of SD feels like the Big Bang. All the code is open source and free, and anyone can run it at home. In just a couple of days the community has built a lot of additional tools around the original product: they hooked up filters for post-processing and quality improvement, reduced memory consumption, ported it to Macs (the developers are still promising support, but I'm already using it), and wrote GUIs and plugins for Photoshop, Krita, GIMP and Figma. All of that took less than a week. What's going to happen in a year?

2. There are probably tens of thousands of people who know about it now, and thousands are using it. The image database includes NSFW content, actors, artists, politicians, Muslims, Orthodox Christians, and probably just your Instagram pics. Billions of images!

Running it locally, users are already busy making things that some groups of people would easily find offensive. What will happen when those groups learn that such a powerful tool exists? Actors will sue, politicians will start banning. But will they be able to?

To me, this looks like the beginning of a new technological revolution that will not only leave some people unemployed, but also provoke a great many ethical debates.

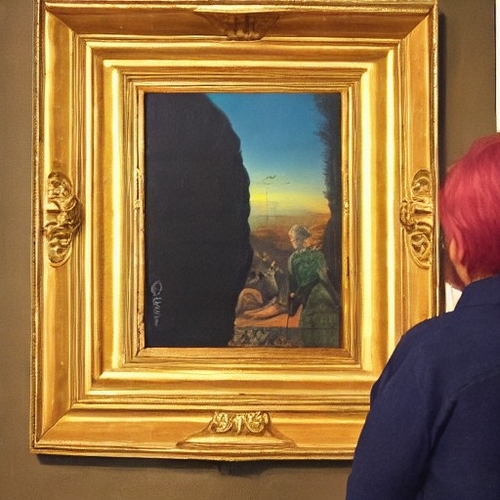

Some of my own examples: